加入 Mergeek 福利群

扫码添加小助手,精彩福利不错过!

若不方便扫码,请在 Mergeek 公众号,回复 群 即可加入

- 精品限免

- 早鸟优惠

- 众测送码

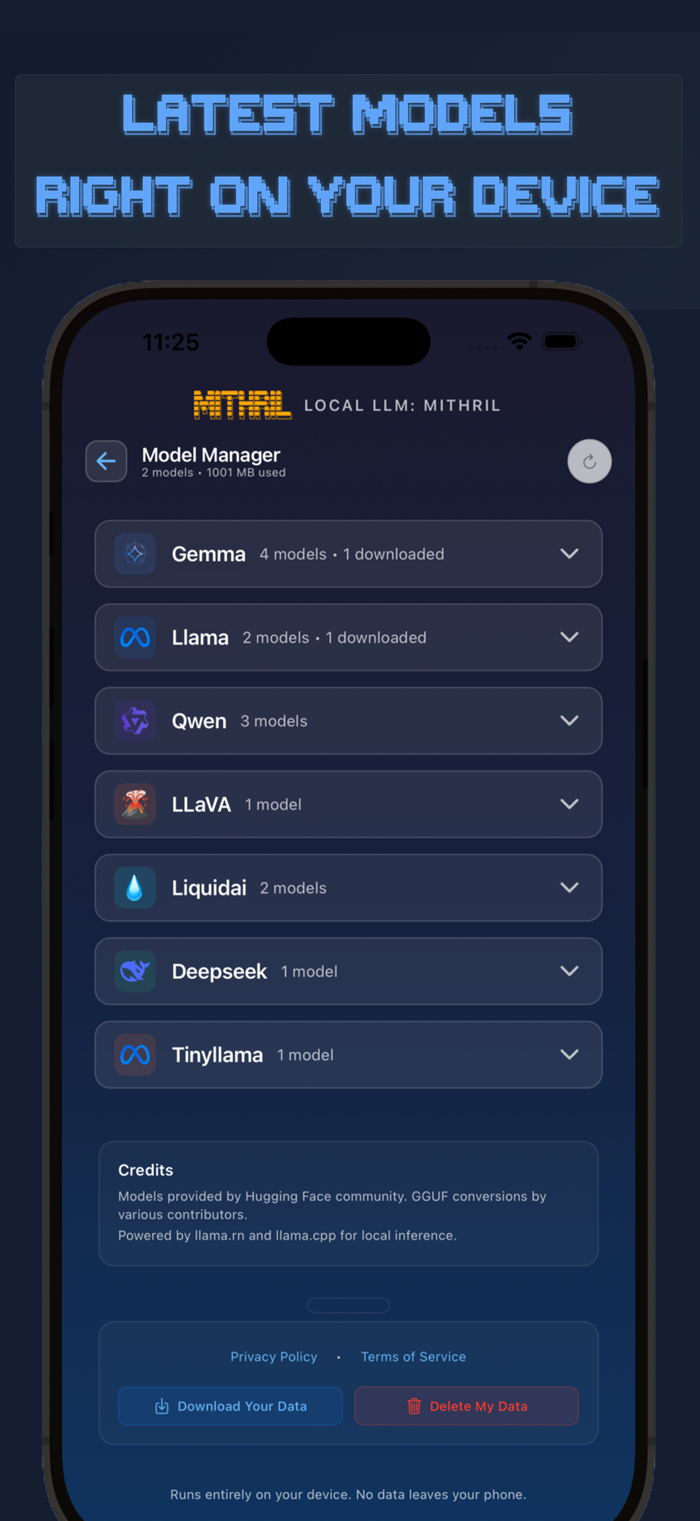

Local LLM: MITHRIL

Run quantized large language models locally on your iPhone, backed by a range of technical features

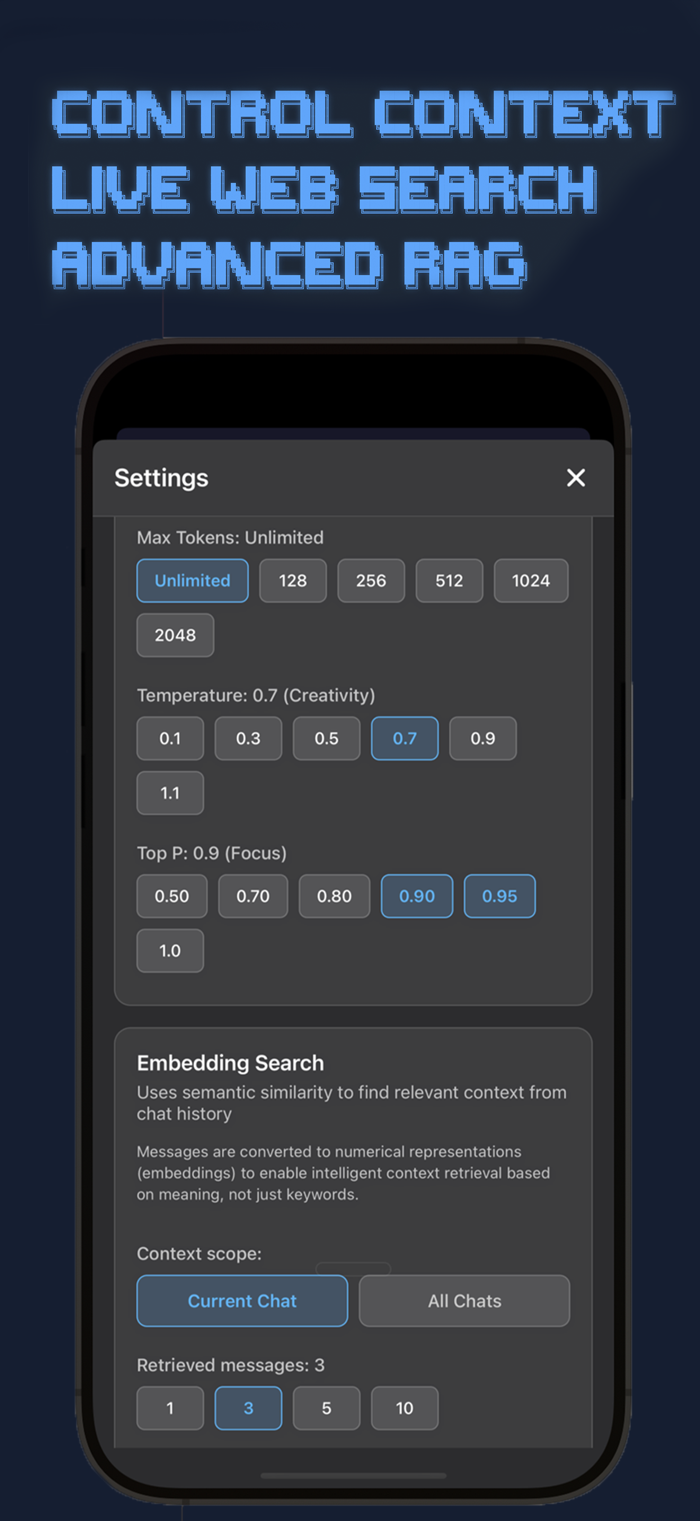

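Run quantized large language models directly on your iPhone; inference requires no cloud service or network connection. The app provides advanced quantized AI models suited to mobile hardware, downloaded in GGUF format. It offers a complete model suite, including Llama 3.2, Gemma 3, Qwen 2.5, and LLaVA 1.5/1.6, with direct Hugging Face integration. Technical features include a GGML/llama.cpp inference engine, Metal GPU acceleration, dynamic context window management, RAG, real-time streaming, and SQLite conversation storage with vector search.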
